The agent is brilliant. The output is wrong.

Every team I've talked to in the last six months hits the same wall in their first month of using AI coding tools.

Cursor ships a feature in twenty minutes that would have taken three days. Claude Code reads the entire codebase and proposes a refactor that's almost right. Devin runs autonomously overnight and produces a PR that passes CI on the first try. The capability is real. The agents are brilliant.

Why it still feels wrong

And somehow, the output is still wrong.

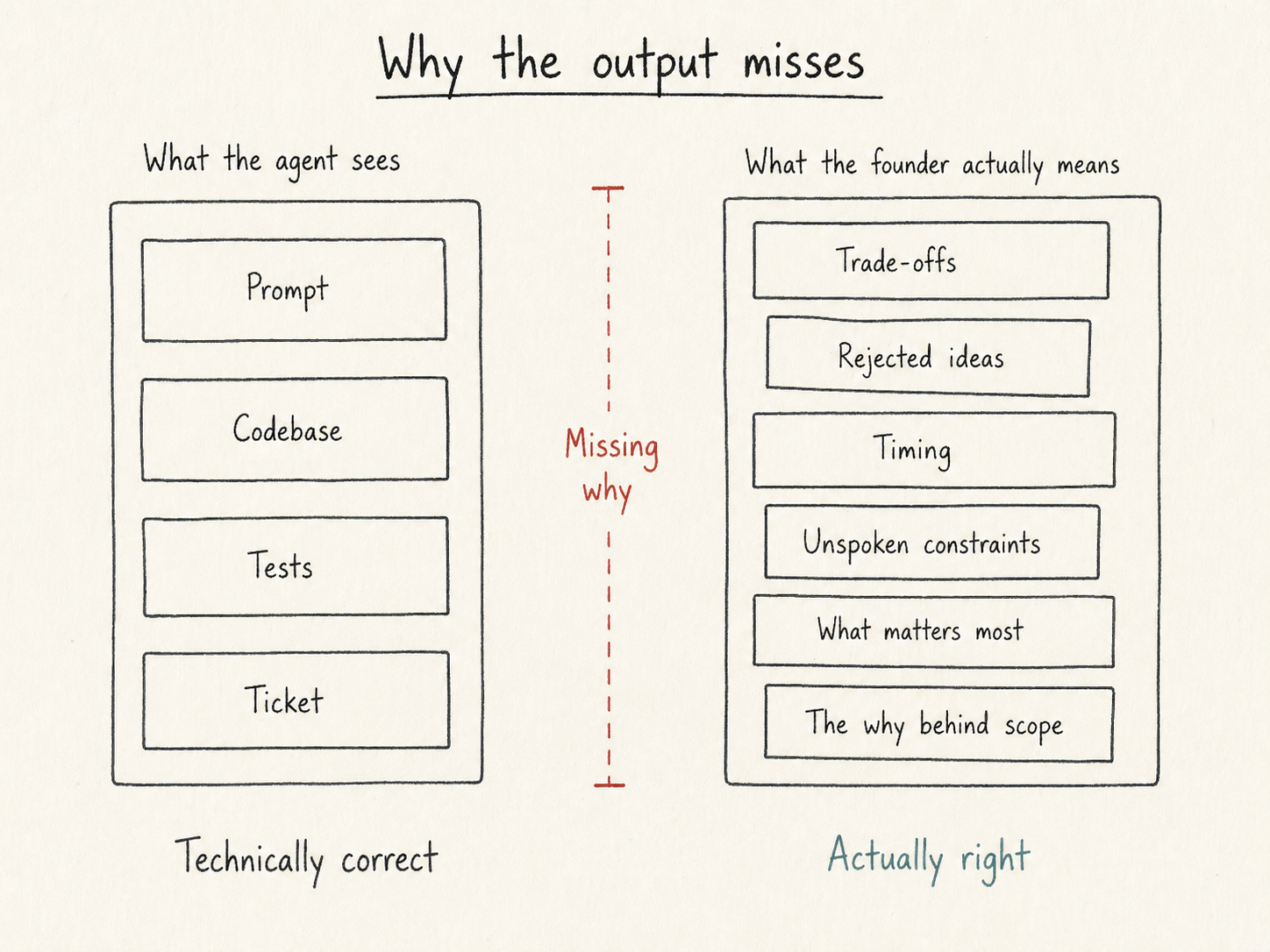

Not wrong in a way that fails CI. Wrong in a more uncomfortable way. The code works. The tests pass. The PR is technically defensible. It just isn't what the founder meant. The agent made twenty small judgment calls along the way, about scope, about edge cases, about what to deprioritize, and every one of them was reasonable. None of them were the founder's. The founder doesn't recognize their own product in the result.

This is the part of agentic coding nobody is talking about because it's awkward. The natural response when your tools are this powerful and the output is still wrong is to assume you're doing something wrong. Maybe the prompt was unclear. Maybe you should have specced it more carefully. Maybe you need to learn to write better requirements. So you spend three days writing a perfect spec for the next feature, and the agent ships something almost right but not quite, and the doubt compounds.

The doubt is misplaced. The bottleneck isn't your prompts. It's not the model either. It's something more structural.

What the agent is missing

The agent doesn't know why. It knows what you said. It doesn't know what you meant, what you considered, what you rejected, what constraint you mentioned in a Slack thread three weeks ago that's still load-bearing. None of that context exists anywhere the agent can reach. So it guesses. The guesses are reasonable, and they're wrong.

I spent thirteen years inside other people's companies watching this exact pattern with humans. Founders had intent. Engineers had specs. The gap between them was where weeks went to die. The way teams closed the gap was the same everywhere: multiple meetings, written-down decisions, painful alignment work. Nobody loved it. It was the cost of building software with people who didn't share your head.

Agents have the same problem with worse symptoms. They don't ask clarifying questions. They don't push back. They don't sit in a meeting and absorb the unspoken context. They take what you said, run with it, and produce technically correct work that misses the point.

What needs to persist

The fix isn't more autonomy. It isn't better prompts either. It's making the why persistent, structured, and queryable. So the next agent that picks up your work doesn't start from scratch. So the trade-offs you considered last week are still in the room when you build this week. So the constraint you mentioned once doesn't have to be re-explained every session.

This is what I'm building Kommit for. Not as a spec tool. There are plenty of those, and they don't solve this. As a substrate. The decisions you make get captured, structured, and made present every time an agent picks up the work. The next session starts from a smarter place than the last one, because the place itself remembers.
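To make "persistent, structured, and queryable" a little more concrete, here is a minimal sketch in Python. It is not how Kommit works. Every name in it (`Decision`, `DecisionLog`, `brief`) and the sample data are hypothetical, and the store is an in-memory list standing in for something durable. It only illustrates the shape of the idea: a decision carries its why, what was rejected, and its constraints, and an agent's session can pull the relevant ones before it starts.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """One captured decision: what was chosen, why, and what was ruled out."""
    topic: str
    choice: str
    why: str
    rejected: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)


class DecisionLog:
    """Hypothetical in-memory stand-in for a persistent decision store."""

    def __init__(self) -> None:
        self._decisions: list[Decision] = []

    def record(self, decision: Decision) -> None:
        self._decisions.append(decision)

    def query(self, keyword: str) -> list[Decision]:
        """Return decisions that mention the keyword anywhere (case-insensitive)."""
        needle = keyword.lower()
        matches = []
        for d in self._decisions:
            haystack = " ".join(
                [d.topic, d.choice, d.why, *d.rejected, *d.constraints]
            ).lower()
            if needle in haystack:
                matches.append(d)
        return matches

    def brief(self, keyword: str) -> str:
        """Render matching decisions as plain text to put in front of an agent."""
        lines = []
        for d in self.query(keyword):
            lines.append(f"- {d.topic}: {d.choice} (why: {d.why})")
            lines.extend(f"  rejected: {r}" for r in d.rejected)
            lines.extend(f"  constraint: {c}" for c in d.constraints)
        return "\n".join(lines)


# Illustrative data only; the feature and its constraint are made up.
log = DecisionLog()
log.record(
    Decision(
        topic="CSV export",
        choice="stream rows to the client",
        why="large accounts time out otherwise",
        rejected=["build the whole file in memory"],
        constraints=["export must work without a background job"],
    )
)
print(log.brief("export"))
```

The keyword match is deliberately naive. The point is the record, not the search: the rejected option and the constraint travel with the decision, so the next session doesn't have to guess at them.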

Why this matters now

The teams that figure this out in 2026 are going to ship software that feels like theirs, even when most of it is written by agents. The teams that don't are going to keep producing technically defensible, almost-right work that quietly erodes their conviction in their own product.

The agent is brilliant. The output is wrong.

The bottleneck moved upstream. Most of the industry hasn't caught up.

Stephan Moerman